Markov Chains - University of Washington

… after the coin has been flipped for the t-th time and the chosen ball has been painted. The state at any time may be described by the vector [u r b], where u is the number of unpainted balls in the urn, r is the number of red balls in the urn, and …

Documents from same domain

Working a difference quotient involving a square root

(sites.math.washington.edu) Working a difference quotient involving a square root. Suppose $f(x) = \sqrt{x}$ and suppose we want to simplify the difference quotient $\frac{f(x+h) - f(x)}{h}$ as much as possible (say, to eliminate the h …

THE COMPLEX EXPONENTIAL FUNCTION

(sites.math.washington.edu) THE COMPLEX EXPONENTIAL FUNCTION (These notes assume you are already familiar with the basic properties of complex numbers.) We make the following definition ... we will first verify the following form of the Law of Exponents: $e^{i\theta_1 + i\theta_2} = e^{i\theta_1} e^{i\theta_2}$ (2). To prove this we first expand the right-hand side of (1) by first multiplying out the

GALOIS THEORY - University of Washington

(sites.math.washington.edu) GALOIS THEORY We will assume on this handout that is an algebraically closed field. This means that every irreducible polynomial in [x] is of degree 1. Suppose that F is a subfield of and that K is a finite extension of F contained in . For example, we can take = C, the field

Galois Field in Cryptography - sites.math.washington.edu

(sites.math.washington.edu) Galois Field in Cryptography. Christoforus Juan Benvenuto, May 31, 2012. Abstract: This paper introduces the basics of Galois Field as well as its im-

STURM-LIOUVILLE BOUNDARY VALUE PROBLEMS

(sites.math.washington.edu) STURM-LIOUVILLE BOUNDARY VALUE PROBLEMS Throughout, we let $[a, b]$ be a bounded interval in $\mathbb{R}$. $C^2([a, b])$ denotes the space of functions with derivatives of second order continuous up to

ZZ dA R [0 2] R - University of Washington

(sites.math.washington.edu) Math 126C 2nd Midterm Solutions, Spring 2014. 3. (8 points) Compute the equation of the tangent line to the curve $r = 1 + 2\sin\theta$ at the point where $\theta = \pi/6$. Give your answer in exact form.

Precalculus - University of Washington

(sites.math.washington.edu) Precalculus. David H. Collingwood, Department of Mathematics, University of Washington; K. David Prince, Minority Science and Engineering Program, College of Engineering

Precalculus - University of Washington

(sites.math.washington.edu) … of study per week (outside class) is a more typical estimate. In other words, for many students, this course is the equivalent of a half-time job!

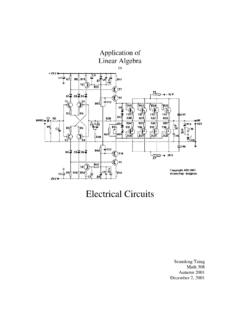

Electrical Circuits - University of Washington

(sites.math.washington.edu) Linear Algebra in Electrical Circuits. Perhaps one of the most apparent uses of linear algebra is that which is used in Electrical Engineering. As most students of mathematics have encountered, when the

Some Remarks on Writing Mathematical Proofs

(sites.math.washington.edu) Some Remarks on Writing Mathematical Proofs. John M. Lee, University of Washington, Mathematics Department. Writing mathematical proofs is, in many ways, unlike any other kind of writing.

Related documents

Math 312 Lecture Notes Markov Chains - Colgate University

(math.colgate.edu) Math 312 Lecture Notes: Markov Chains. Warren Weckesser, Department of Mathematics, Colgate University. Updated 30 April 2005. Markov Chains: A (finite) Markov chain is a process with a finite number of states (or outcomes, or events) in which

CS 547 Lecture 35: Markov Chains and Queues

(pages.cs.wisc.edu) Continuous Time Markov Chains. Our previous examples focused on discrete time Markov chains with a finite number of states. Queueing models, by contrast, may have an infinite number of states (because the buffer may contain any number of ... which are treated the same as any other transition in a Markov …

Key words. AMS subject classifications.

(langvillea.people.cofc.edu) Markov chains in the new domain of communication systems, processing “symbol by symbol” [30] as Markov was the first to do. However, Shannon went beyond Markov’s work with his information theory application. Shannon used Markov chains not solely

CS 547 Lecture 34: Markov Chains

(pages.cs.wisc.edu) CS 547 Lecture 34: Markov Chains. Daniel Myers. State Transition Models: A Markov chain is a model consisting of a group of states and specified transitions between the states. Older texts on queueing theory prefer to derive most of their results using Markov models, as opposed to the mean

On the Markov Chain Central Limit Theorem - Statistics

(users.stat.umn.edu) On the Markov Chain Central Limit Theorem. Galin L. Jones, School of Statistics, University of Minnesota, Minneapolis, MN, USA, galin@stat.umn.edu. Abstract: The goal of this paper is to describe conditions which guarantee a central limit theorem for functionals of general state space Markov chains. This is done with a view towards Markov

An introduction to Markov chains - web.math.ku.dk

(web.math.ku.dk) … aspects of the theory for time-homogeneous Markov chains in discrete and continuous time on finite or countable state spaces. The backbone of this work is the collection of examples and exer-

4. Markov Chains - Statistics

(dept.stat.lsa.umich.edu) Example: physical systems. If the state space contains the masses, velocities and accelerations of particles subject to Newton’s laws of mechanics, the system is Markovian (but not random!)

Markov Chains (Part 2) - University of Washington

(courses.washington.edu) General Markov Chains • For a general Markov chain with states 0, 1, …, M, the n-step transition from i to j means the process goes from i to j in n time steps

Markov Chains - University of Washington

(courses.washington.edu) Stochastic Processes • Suppose now we take a series of observations of that random variable, $X_0, X_1, X_2, \ldots$ • A stochastic process is an indexed collection of random

MARKOV CHAINS: BASIC THEORY - University of Chicago

(galton.uchicago.edu) MARKOV CHAINS: BASIC THEORY. Definition 2. A nonnegative matrix is a matrix with nonnegative entries. A stochastic matrix is a square nonnegative matrix all of whose row sums are 1. A substochastic matrix is a square nonnegative matrix all of whose row sums are ≤ 1.
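The matrix definitions quoted in the last entry, together with the n-step transition idea mentioned in the "Markov Chains (Part 2)" entry, can be illustrated with a small sketch. This is only an illustration under stated assumptions: the function names (`is_stochastic`, `is_substochastic`, `n_step`), the tolerance `tol`, and the example matrices are hypothetical and do not come from any of the listed documents.

```python
from typing import List

Matrix = List[List[float]]


def is_nonnegative_square(m: Matrix) -> bool:
    """True if m is a non-empty square matrix with no negative entries."""
    n = len(m)
    return n > 0 and all(len(row) == n and all(x >= 0 for x in row) for row in m)


def is_stochastic(m: Matrix, tol: float = 1e-9) -> bool:
    """Square, nonnegative, and every row sums to 1 (within tol)."""
    return is_nonnegative_square(m) and all(abs(sum(row) - 1.0) <= tol for row in m)


def is_substochastic(m: Matrix, tol: float = 1e-9) -> bool:
    """Square, nonnegative, and every row sums to at most 1 (within tol)."""
    return is_nonnegative_square(m) and all(sum(row) <= 1.0 + tol for row in m)


def n_step(m: Matrix, n: int) -> Matrix:
    """n-step transition matrix, computed as the n-th power of m by repeated multiplication."""
    size = len(m)
    # Start from the identity matrix (the 0-step transition matrix).
    result = [[1.0 if i == j else 0.0 for j in range(size)] for i in range(size)]
    for _ in range(n):
        result = [
            [sum(result[i][k] * m[k][j] for k in range(size)) for j in range(size)]
            for i in range(size)
        ]
    return result


# Hypothetical two-state examples.
P = [[0.5, 0.5], [0.25, 0.75]]   # rows sum to 1
Q = [[0.5, 0.25], [0.0, 0.5]]    # rows sum to less than 1
P2 = n_step(P, 2)                # entry [i][j]: probability of going from i to j in 2 steps
```

Under these definitions every stochastic matrix is also substochastic, and a power of a stochastic matrix is again stochastic, which is why `n_step` can be read as a transition matrix in its own right.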
